How to find out about the usability of your website using a survey

My starting point for a workshop I led at UX Cambridge 2012 was a question I had been asked: can a usability test use only a questionnaire, with no observation? This presentation – How to find out about the usability of your website using a…

Category: Surveys

Do you trust me enough to answer this question? Trust and data quality

Here’s a question for you: what is your social security number? If you’re from the US, you probably thought, “Why should I tell you that?” If you’re from elsewhere, you probably thought, “I don’t have one of those. Does it matter?” Either…

Better UX surveys for UCD2012

UCD2012 – the User Centred Design Conference in London – was an initiative organised by the Interaction Group of the British Computer Society (BCS), the British Interactive Media Association (BIMA), the Institute of Ergonomics and Human Factors (IEHF), the Interaction Design Association (IxDA) and the User…

How to ask about customer satisfaction in a survey

Surveys often include questions about satisfaction. But what is satisfaction anyway? And are there better ways to ask about it? To measure customer satisfaction, we need to consider the customer’s starting point and the comparisons that drive whatever emotion the…

How to ask better questions and how to assess UX using surveys

These slides are from the first part of a workshop I ran for EBI on user experience surveys. They cover two key topics: how to improve the questions in surveys, and how to assess UX using a survey. Better UX…

Ten tips for a better UX survey, Las Vegas 2012

I was delighted to be invited to talk at the User Experience Professionals Association Conference in Las Vegas in June. This presentation offers tips on writing better questions, using rating scales well, improving the whole survey process, and testing, testing…

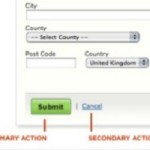

Buttons on forms and surveys: a look at some research

Where to put the buttons on forms? There seem to be endless discussions: Does ‘submit’ or ‘send’ or ‘OK’ go to the left or right of ‘cancel’? Does ‘next’ go to the left or right of ‘previous’? My views are: …

Three reasons why response from panels may not be what you want

What might turn an honest, happy respondent into a despondent cheat? I’m a dedicated survey respondent. I have lots of reasons why I tenaciously try to respond to every survey invitation that I get: I’m collecting examples for my library of…

Design tips for surveys 2012 – a seminar for UIE

When I was invited, as a Rosenfeld Media aspiring author, to talk about surveys for the UIE All You Can Learn series of seminars, I had to think hard about how to condense a full-day training workshop into something that would work for…

How to get yourself started in statistics

How do you feel about statistics? For a long time, I was a stats refusenik. When I was doing my first degree back in the 1970s, I took a class in mathematical statistics, but it never made any sense to…