Here’s a question for you: what is your social security number?

If you’re from the US, you probably thought: “Why should I tell you that?” If you’re from elsewhere, you probably thought, “I don’t have one of those. Does it matter?”

Either reaction shows that simply asking a question—whether on a website, in a survey, or elsewhere—isn’t enough to guarantee an answer.

To get an answer, I’d have to persuade US readers to trust me: to feel comfortable that I have a legitimate reason for asking and that I’ll look after an important piece of personal data appropriately. And I’d have to persuade readers from elsewhere to trust that the effort of working out how to deal with that question will be repaid. Either way, trust is crucial. If you don’t trust me to make proper use of the data, you won’t want to give me that data, or make the effort of obtaining it for me.

Trust is influenced by experience

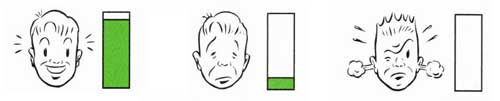

In Don’t Make Me Think! A Common Sense Approach to Web Usability, Steve Krug suggests that users come to each page or form with a ‘reservoir of goodwill’. Too low a level of goodwill and users give up on their tasks, or, for the more web-savvy, replace their accurate answers with a parallel set of answers that they maintain for this purpose. (The unkind might call this lying.) Either way, the result is poor data quality.

Based on a lifetime of experiments in survey administration and analysis, Don Dillman developed his “social exchange theory” described in Internet, Mail, and Mixed-mode Surveys. His theory has similarities to Krug’s reservoir of goodwill.

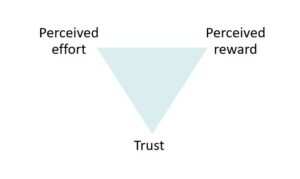

Dillman points out that higher response rates come from balancing the user’s perception of the cognitive and emotional effort required against the perceived social and financial rewards, all in the context of trust.

Dillman’s theory is based on extensive research, including one famous experiment that looked at response rates to a paper survey sent out with three different levels of incentive:

- No financial incentive

- Guaranteed $10 offered after return of the completed survey

- A $2 bill enclosed with the mailed paper survey.

Although the guaranteed $10 created a small increase in response compared to no incentive, the biggest uplift came from the $2 bill. Dillman explains this in terms of trust. There is a small immediate reward, but what the $2 bill really demonstrates is that the organisation issuing the survey trusts the respondent enough to hand out the reward in advance. And enough respondents feel the urge to reciprocate that trust to make an important contribution to response rates. Contrast that with the $10; even though it’s guaranteed, it requires that the respondent take the first step in demonstrating trust.

Put your questions into a credible context

Most of us in user experience are working on little bits of feedback such as “What did you think of this website today?”, or on forms that are as short as we can make them and still be consistent with their purpose.

It’s not practical for us to send our users an actual letter in advance of our questions, let alone one with real currency in it. The effort of opening an envelope and reading the letter would be disproportionate: it would increase the perceived effort without a corresponding increase in trust to justify it. Instead, the user’s experience of the website before answering your questions has to establish your credibility.

In our book Forms that Work, Gerry Gaffney and I point out that one small thing you can do to build trust is to give the user a form when a form is expected. That is, don’t offer a long list of instructions or hide the form away somewhere. Get right to it.

Without a prior notification or letter, the page of questions must stand alone as trustworthy in its own right, continuing the build-up of trust achieved by the website where it originated. If you hand your users over to a third-party service, such as a payment handler or a market research business for your satisfaction surveys, then you need to think carefully about whether to keep continuous visual branding or to opt for the branding of the third party.

People worry about how you use the data

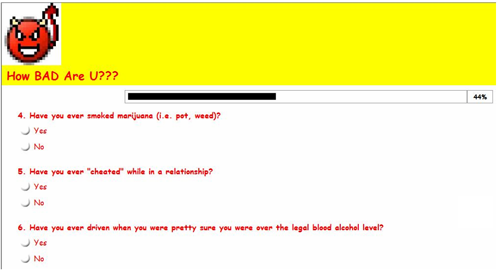

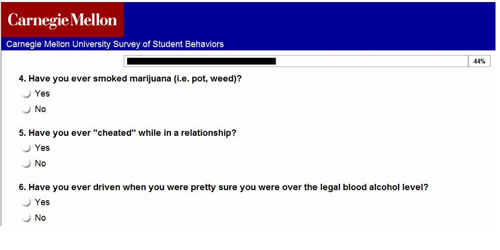

If it looks like you won’t use the data against them, users are more likely to trust you with it. There’s an interesting result from an experiment by Leslie K. John, Alessandro Acquisti, and George Loewenstein, who branded one version of the same questions as a cheap quiz in the typeface Comic Sans, and another version as an authoritative survey from a respected university. Teenagers were more likely to admit to illegal behaviours, such as dope smoking, when answering the cheap quiz.

Are you thinking, “Gullible teens can be trapped by anything”? Well, think again. The researchers achieved similar results with New York Times readers, who revealed more unethical behaviours when answering a questionnaire branded as a “Test Your Ethics” quiz on a columnist’s blog than when answering the same questionnaire sponsored by a university.

Please don’t think that I advocate hiding your data collection activities behind Comic Sans quizzes. That’s a deceptive pattern that is at best unprofessional and leans towards the unethical.

- John, L. K., Acquisti, A., & Loewenstein, G. (2011). “Strangers on a Plane: Context-Dependent Willingness to Divulge Sensitive Information.” Journal of Consumer Research.

The questions influence trust

When I discuss the topic of trust in the context of surveys and forms with web-savvy audiences at conferences, they often claim to scan the questions ahead of time and then make a decision about whether they trust the organisation well enough to fill in the form.

I do not know of an experimental comparison that has investigated similar issues in a controlled context, but I have often observed users in usability tests who, on a multi-page form, opt to “fill in this form with any old thing” to explore the set of questions, with the intention of repeating the process with their real data at some point later. If they encounter questions that they consider inappropriate, they will be gone, never to return.

The question experience can affect trust in the brand

So far, we’ve been looking at the way that trust can affect the willingness to answer. If we turn that around, the question-and-answer process can also affect the longer term trust in the brand. Just as a bad experience with a form can empty that reservoir of goodwill, so can a good one refill it.

The longer term view leads me to a final thought—the dreaded “opt-in” questions that appear at the end of so many forms, such as those in this screenshot.

This table summarises the perceived effort and perceived reward from each of those questions.

| Question | Typical perceived effort | Typical perceived reward |
|---|---|---|
| Email address | Relationship effort: many of us have learned to guard our email addresses carefully, and revealing the email address is a compromise with our standards of privacy. | The opt-in question has no explanation of how the email address will be used; the link to the privacy policy is some distance away. The perceived reward is uncertain. |
| Newsletter | Conversation effort: The sentence is a long one and takes a bit of reading. There is no promise as to how often the information will appear, so the user may be creating considerable future work. | The organisation offers four types of information. This could be regarded as useful and valuable, or it might be regarded as overwhelming. |
| Agreement to terms | Appearance effort: This tick box is unchecked, whereas the previous one was checked. The user has to notice the difference and realise that this box has to be checked. | Assuming that this form stands between the user and some perceived reward, there is benefit in checking this box. But will those terms and conditions be easy to understand and appropriate, or onerous? |

Questions like these are frequent, and you may well argue that they are familiar and that users cope with them perfectly well. (Or you may have been on the receiving end of similar, “They’ll cope, and we need that data,” instructions from clients and colleagues.)

Of course, both of those arguments are correct. Users will mostly cope with this sort of thing. But will they cope gladly? Isn’t there a risk that they’ll be left with a bit of a twinge, some minor disappointment that your organisation or brand has subjected them to the same old unthinking “opt-in” that they’ve suffered so many times before? Won’t that twinge slightly undermine their trust in you? Is the longer-term damage to the brand really justified by the value of the data?

Summary: Think about trust when asking questions

It’s important to think about trust when asking questions. A question isn’t just a question; it is part of a social exchange, a conversation that takes place within the context of what users expect from organisations and what they hope to achieve with that conversation.

When designing those exchanges, you should aim to:

- Create a trustworthy context. Make it clear who you are and why you are asking the questions.

- Be consistent. Make sure that the questions themselves are consistent with your organisation’s brand, and the purpose for which they are asked.

- Preserve trust for the next time. Avoid ending the question sequence with a series of minor irritations that can damage the perception of your organisation’s brand.

This article first appeared as Jarrett, C. (2012). “Do you trust me enough to answer this question?” Trust and Data Quality. User Experience Magazine, 11(4).

Featured image Trust by purplejavatroll, creative commons

![A question that asks for email, followed by a box (already ticked) for Newsletter: Keep me up top date with [redacted] news, software updates and the latest information on products and services and a box (left blank) for "I agree to the Terms and Conditions (Required -See Link Below) Then there is a Continue button The screenshot ends with links to "Terms and condition" and "[redacted] Privacy Policy"](https://www.effortmark.co.uk/wp-content/uploads/2012/11/Opt-in-question.png)